Is Economics Finally Becoming Honest?

Economist Ed Leamer made a stinging criticism of his field in his famous 1983 article “Let’s Take the Con Out of Econometrics”. He argued that researchers did not trust one another’s estimates because those estimates were sensitive to the many assumptions and choices made throughout the research process. But in the decades since Leamer’s criticism, the academic community has tended to take peer-reviewed studies more seriously.

This began to change with Dr. John Ioannidis’s 2005 hit article “Why Most Published Research Findings Are False”. Concern about a “replication crisis” grew rapidly in the 2010s, aided by the growth of social media. Psychology was hit first and hardest, starting with the 2011 article “False-Positive Psychology”. But economics and the other social sciences were not spared.

A basic premise of science is that research must be replicable. If one scientist does an experiment to measure a physical constant like the speed of light, and documents the work well enough, other scientists should be able to repeat the experiment and get the same result. If one lab’s results can’t be replicated elsewhere, as with cold fusion, they are probably not true.

Outside a hard science like physics, we don’t expect the same precision. Perhaps one trial finds a drug reduces heart attacks by 17%, and another finds 14%. But for research to usefully inform our actions, it needs to be at least somewhat replicable. If one test finds a drug works but all subsequent tests find it does nothing, people probably shouldn’t take the drug.

Social science research has spent decades producing the equivalent of medical studies touting drugs that turn out to be ineffective or harmful. When a team led by Brian Nosek tried in 2015 to replicate 100 experiments published in top psychology journals, fewer than half of the replications produced statistically significant results. A Federal Reserve discussion paper issued the same year found similarly discouraging results for published economics papers.

If peer-reviewed studies published in top journals can’t be trusted, what can we trust? As of 2015, popular answers included “nothing” or some combination of common sense and informed prior beliefs. But the reforms made in the wake of the replication crisis may finally be starting to bear fruit in the form of replicable, reliable research.

The US military was one of many institutions that relied on social science research to guide decision making. When the replication crisis led to skepticism about this research, it decided to take action. The Defense Advanced Research Projects Agency (DARPA), famous for funding breakthroughs in hard technologies like the Internet and self-driving cars, funded Brian Nosek and the Center for Open Science to test a wide range of research from across the social sciences. The idea was both to test how reliable the research was and to see whether the research that turned out to be more reliable had anything in common.

The results of this effort have recently been published in a special issue of The environment. Hundreds of researchers from across the social sciences (I was one of them) tried to replicate hundreds of claims from papers published in top social science journals. Overall, we found that things are getting better, though from a bad start. For example, many papers still do not share the data or code that supposedly produced their results, but papers are much more likely to share them now than they were in 2009, the start of the period studied.

Figure 1: Availability of data and code by year of publication

Source: The environment

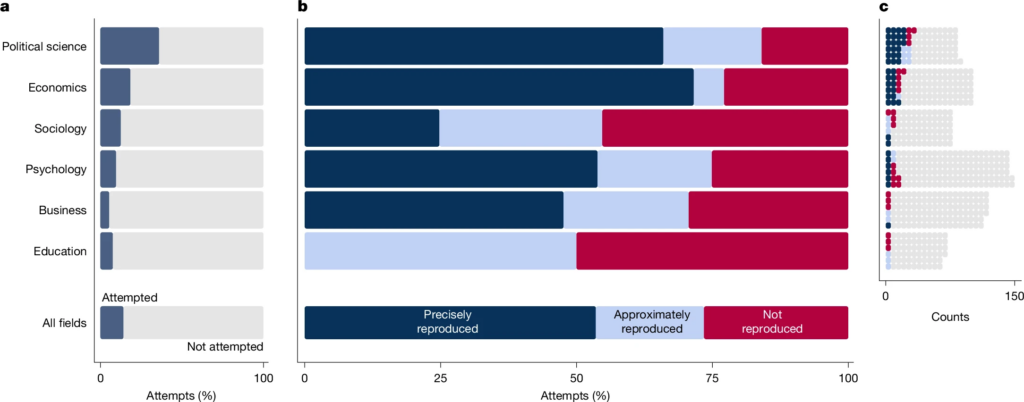

Economics and political science look particularly good on this measure, with almost half of papers sharing data or code, compared with fewer than one in ten papers in education. Economics also scored well on “reproducibility”, a low bar that many of its papers cleared. Reproducibility means that if other researchers take the exact dataset the published paper says it used and apply exactly the method the paper describes, they get exactly the same result. Economics papers reproduced exactly 67% of the time, the highest rate of any field studied.

Figure 2: Reproducibility by field

Source: The environment

I call this a low bar because it simply means that the original researchers described what they did well enough for others to copy it, not that what they found was right (conversely, if they hadn’t described it well enough for others to copy, that wouldn’t mean they were wrong). How do we know whether they were right?

Other papers in The environment set out to assess how sensitive the results are to changes in analytical methods. If there are several valid ways to analyze the data, did the original researchers happen (by coincidence or by cherry-picking) to choose the only one that gives statistically significant results? Or do multiple reasonable approaches reach the same conclusion?
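The cherry-picking worry can be made concrete with a toy simulation. The sketch below assumes there is no true effect at all, that each “valid method” is an independent test on fresh, normally distributed data with known variance, and that the researcher reports a finding if any of k tries gives p < 0.05. Real alternative specifications run on one dataset are correlated, so the inflation there would be smaller; the function names and parameters are illustrative, not taken from the papers discussed here.

```python
import math
import random


def two_sided_p(z: float) -> float:
    """Two-sided p-value for a standard-normal test statistic."""
    return math.erfc(abs(z) / math.sqrt(2))


def z_test(treated: list[float], control: list[float]) -> float:
    """z statistic for a difference in means, assuming known unit variance."""
    n, m = len(treated), len(control)
    diff = sum(treated) / n - sum(control) / m
    return diff / math.sqrt(1 / n + 1 / m)


def false_positive_rate(k: int, n: int = 50, trials: int = 2000, seed: int = 0) -> float:
    """Share of null studies reported as 'significant' when the researcher
    tries k independent analyses and stops at the first one with p < 0.05.
    Assumption: both groups are drawn from the same N(0, 1), so any
    significant result is a false positive."""
    rng = random.Random(seed)
    hits = 0
    for _ in range(trials):
        for _ in range(k):
            treated = [rng.gauss(0, 1) for _ in range(n)]
            control = [rng.gauss(0, 1) for _ in range(n)]
            if two_sided_p(z_test(treated, control)) < 0.05:
                hits += 1
                break  # the "winning" analysis gets reported
    return hits / trials


for k in (1, 3, 5):
    print(k, round(false_positive_rate(k), 3))
```

With independent tries the false-positive rate should land near 1 - 0.95^k, roughly 5%, 14% and 23% for k = 1, 3 and 5, even though nothing is there to find.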

Here most of the papers can be called “directionally correct”. In robustness tests, 74% found statistically significant results in the same direction as the original, but only 34% found an effect size close to the original.

When researchers tried to replicate the claims on new datasets (not just using new methods on existing data), only half found statistically significant results in the same direction as the original, and the effects they found were less than half as large as the originals.

Overall, this suggests that published social science research tends to exaggerate the size of effects, and often reports effects that may not exist. This is not ideal, but relying on research is still better than chance. For example, the robustness tests found significant results in the opposite direction from the original paper only 2% of the time.
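One standard mechanism that produces this pattern is the “significance filter” (sometimes called the winner’s curse): if studies are more likely to be published when they are significant, published estimates are biased upward even when every individual study is honest. The toy simulation below is an illustration under assumed numbers (a true effect of 0.2 standard deviations, a standard error of 0.2, and publication only for positive results with z > 1.96); it is not a model of the replication projects described above.

```python
import random
import statistics


def simulate_significance_filter(true_effect: float = 0.2, se: float = 0.2,
                                 n_studies: int = 20000, seed: int = 1):
    """Return (mean published estimate, mean replication estimate,
    share of replications that are significant and positive).
    Assumption: each study's estimate is drawn from N(true_effect, se)."""
    rng = random.Random(seed)
    published, replications = [], []
    for _ in range(n_studies):
        estimate = rng.gauss(true_effect, se)  # original study
        if estimate / se > 1.96:  # only significant, positive results get published
            published.append(estimate)
            # a same-sized replication on new data, with no filter applied
            replications.append(rng.gauss(true_effect, se))
    replicated_sig = sum(r / se > 1.96 for r in replications) / len(replications)
    return statistics.mean(published), statistics.mean(replications), replicated_sig


pub, rep, sig = simulate_significance_filter()
print(round(pub, 2), round(rep, 2), round(sig, 2))
```

In this stylized setting, published estimates average about 0.5, roughly two and a half times the true effect, while same-sized replications average about 0.2 and reach significance only around 17% of the time. Different assumed effect sizes and standard errors change the degree of exaggeration, but not its direction.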

What does all this mean for consumers of research? It’s always a good idea to trust whole literatures over single papers. In economics, the Journal of Economic Perspectives does an excellent job of summarizing areas of research in an accessible way.

As a quick new rule of thumb inspired by the papers in The environment, you could do worse than “cut published effect sizes in half”. If a published paper says that a college degree increases wages by 100%, the true increase is more likely around 40-50%. In 2005, John Ioannidis argued that “most published research findings are false”. By 2026, we seem to have progressed to “many published research findings are exaggerated”.
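The halving arithmetic has a wrinkle for large percentage effects. Halving the percentage directly turns 100% into 50%; halving the effect in log points (a common way wage effects are estimated, assumed here for illustration) turns it into about 41%. This is one possible way to read a range like 40-50%, though the text does not say which calculation it had in mind. The function name below is hypothetical.

```python
import math


def halve_effect(pct_increase: float) -> tuple[float, float]:
    """Apply the 'cut it in half' rule two ways to a reported % increase.
    Returns (halved percentage, percentage implied by halving log points)."""
    naive = pct_increase / 2
    log_points = math.log1p(pct_increase / 100)  # e.g. 100% -> ln 2
    via_logs = math.expm1(log_points / 2) * 100  # back to a % increase
    return naive, via_logs


naive, via_logs = halve_effect(100)
print(naive, round(via_logs, 1))
```

For small effects the two calculations nearly coincide; they diverge only as the reported effect gets large, which is exactly the case in the college-degree example.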
